Integrating real-world site data for 3D modeling

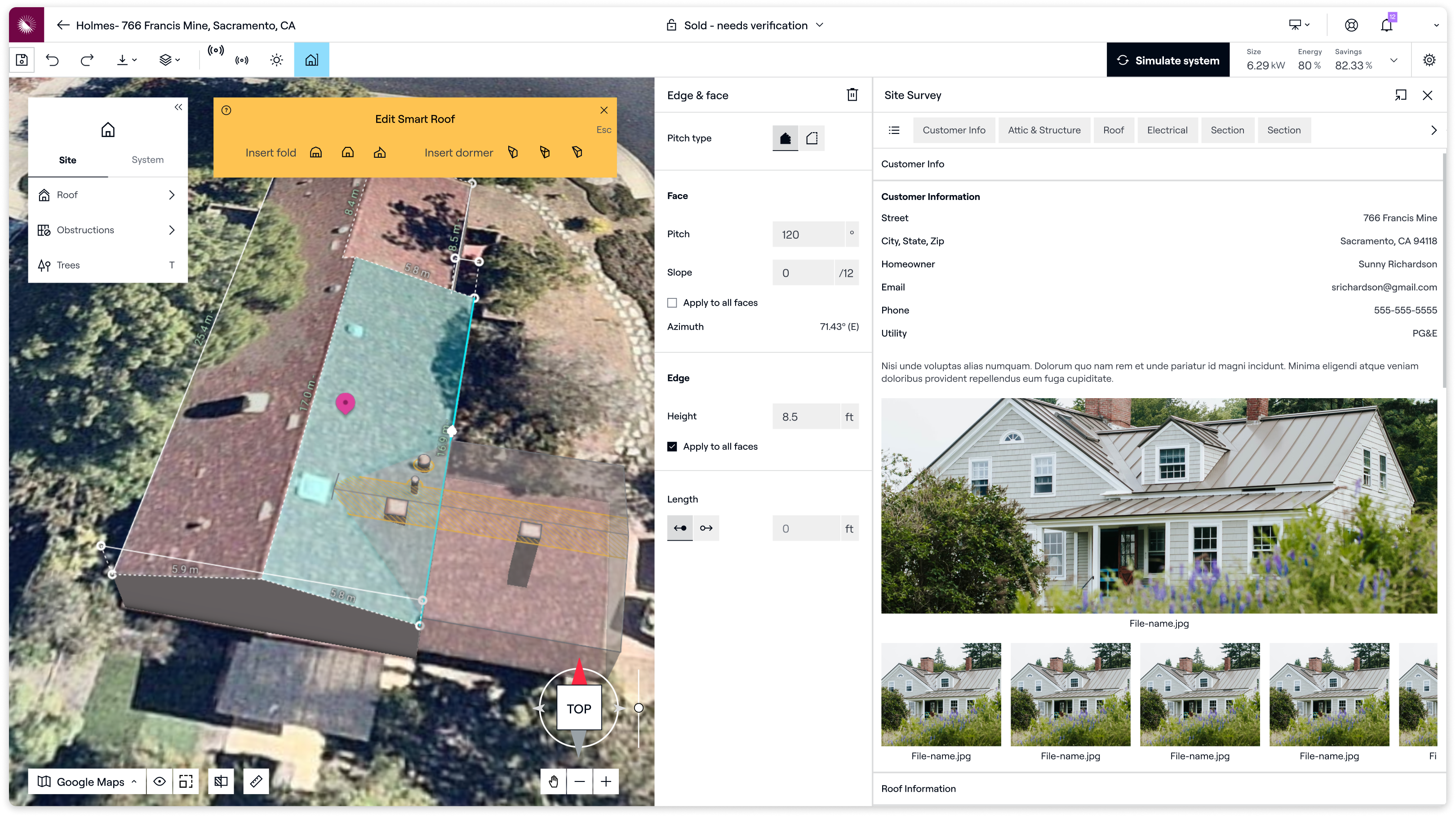

Solar designers using Aurora's 3D modeling tool had to manually sift through PDFs of 50 to 100 pages to find site photos and measurements while building accurate designs. I led rapid discovery and design to bring that data directly into the modeling workflow: an extensible drawer interface with spatial 3D canvas objects that connected real-world site data to the model itself. The MVP shipped to pilot customers and led to a leadership-approved north star vision for full platform integration with external site data sources.

- A 0-to-1 feature, taken from problem and concept to MVP.

- Strong positive response from pilot customers and internal stakeholders.

- The work unlocked a broader strategic direction for Aurora: leadership approved a north star vision for platform-wide site data integration, a sign that the MVP had validated both the user need and the product direction.

- Playbook development for future partner data integration.

Designing a solar system for a residential installation requires precision. Before finalizing panel placement, roof measurements, and system specs, designers need to verify their 3D model against real-world data collected on site: photos, measurements, physical conditions. That data existed, but it lived outside Aurora. Designers were working from PDFs sometimes 50-100 pages long, manually hunting for the right photo or measurement while keeping their 3D model in mind.

The cognitive load was significant. Designers had to find the data, then mentally connect what they were seeing in a flat document to a three-dimensional model of a real roof. Aurora had the opportunity to change this by integrating site survey data directly into the platform where designers did their work.

I joined the team on loan from my usual product pod, brought in to run rapid product and design discovery and get the team to an MVP as fast as possible. The goal was velocity without sacrificing the quality of the problem framing.

Without time for primary user research, I facilitated a focused discovery sprint with the cross-functional team and the people inside Aurora who had the deepest context on this problem space.

The key insight that emerged: there were two distinct but related problems. The first was navigation. Users couldn't efficiently find the specific photo or measurement they needed in a large, unstructured data set. The second was association. Even when they found the right data, they struggled to mentally connect it to the 3D model they were building. Same root cause, two different design problems requiring two distinct solutions.

Discovery sprint outcome:

Design principle 1

Improve the navigability of the site survey data while performing the tasks needed to edit the model

Design principle 2

Improve the association between the site survey data and the Aurora 3D model

Design principle 3

Reduce the cognitive load for the Aurora designer, possibly by shifting work to the surveyor

The design challenge wasn't simply adding a data panel to the interface. It required doing so in a way that didn't collapse the 3D workspace users depended on, while giving them genuine spatial understanding of how site data connected to their model.

Designers work in a complex, information-dense 3D canvas. Any new UI element competes for screen real estate and attention. The solution needed to be present when needed, collapsible when not, and flexible enough to adapt to how individual designers preferred to work.

The solution addressed both problems identified in discovery through two connected features.

An extensible, repositionable drawer activated from the top toolbar. The drawer could be expanded, collapsed, or popped out into a separate window, giving designers full control over how they shaped their workspace. This flexibility was my core design invention: not just a panel, but a workspace-aware tool that adapted to the user rather than imposing a fixed layout.

A compact filter navigation inside the drawer using multilevel filter chips, letting users cut through large data sets efficiently by type, location, or category. What previously required scrolling through a 100-page PDF could now be navigated in seconds.
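To make the filter chip behavior concrete, here is a minimal sketch of how multilevel chips might narrow a set of survey items. It is illustrative only: the `SurveyItem` shape, the `ChipSelection` type, and the `applyChips` function are hypothetical names, not Aurora's actual data model or code. The assumption is that chips within one level are alternatives (any match passes) and that levels combine (every active level must match).

```typescript
// Hypothetical shape of one site survey item; field names are assumptions.
interface SurveyItem {
  id: string;
  type: "photo" | "measurement";
  location: string; // e.g. "roof", "attic"
  category: string; // e.g. "obstruction", "pitch"
}

// Selected chip values per filter level. A missing or empty level means "no filter".
type ChipSelection = Partial<Record<"type" | "location" | "category", string[]>>;

// Keep an item only if it matches every level that has at least one chip selected.
function applyChips(items: SurveyItem[], selection: ChipSelection): SurveyItem[] {
  const levels = Object.keys(selection) as (keyof ChipSelection)[];
  return items.filter((item) =>
    levels.every((level) => {
      const chosen = selection[level];
      return !chosen || chosen.length === 0 || chosen.includes(item[level]);
    })
  );
}

// Made-up sample data for illustration.
const sampleItems: SurveyItem[] = [
  { id: "p1", type: "photo", location: "roof", category: "obstruction" },
  { id: "p2", type: "photo", location: "attic", category: "rafters" },
  { id: "m1", type: "measurement", location: "roof", category: "pitch" },
];

// Selecting the "photo" chip and then the "roof" chip leaves a single item.
const roofPhotos = applyChips(sampleItems, { type: ["photo"], location: ["roof"] });
```

With no chips selected, `applyChips` returns the full set, which mirrors the drawer's default unfiltered view in this sketch.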

New 3D canvas objects that spatially located photos and measurements directly within the model. Rather than asking designers to mentally map a flat document onto a 3D space, these objects anchored real-world data to the specific part of the roof or structure it described, closing the gap between site reality and digital model.
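One way to picture the anchoring is as a survey item paired with a position in model space, so that picking a point on the roof can surface the data captured nearby. The sketch below is an assumption-laden illustration, not the implemented design: `AnchoredSurveyObject`, `objectsNear`, the coordinate convention, and the use of metres are all hypothetical.

```typescript
// A point in model space: [x, y, z]. Units are assumed to be metres.
type Vec3 = [number, number, number];

// Hypothetical link between a survey item and the spot on the model it describes.
interface AnchoredSurveyObject {
  surveyItemId: string;
  anchor: Vec3;
}

// Straight-line distance between two points.
function distance(a: Vec3, b: Vec3): number {
  return Math.hypot(a[0] - b[0], a[1] - b[1], a[2] - b[2]);
}

// Return the anchored objects within `radius` of `point`, closest first.
function objectsNear(
  objects: AnchoredSurveyObject[],
  point: Vec3,
  radius: number
): AnchoredSurveyObject[] {
  return objects
    .filter((o) => distance(o.anchor, point) <= radius)
    .sort((a, b) => distance(a.anchor, point) - distance(b.anchor, point));
}

// Made-up anchors for illustration.
const anchored: AnchoredSurveyObject[] = [
  { surveyItemId: "m1", anchor: [1, 0, 0] },
  { surveyItemId: "p1", anchor: [0, 0, 0] },
  { surveyItemId: "p2", anchor: [5, 5, 0] },
];

// Picking the origin with a 2 m radius surfaces the two nearby items, closest first.
const nearOrigin = objectsNear(anchored, [0, 0, 0], 2);
```

The point of the structure is that the association lives in the data rather than in the designer's head: the photo or measurement carries its own location on the model.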

I generated multiple solution concepts and facilitated the team's evaluation before arriving at a deliberate sequencing decision: build the navigation drawer first, 3D objects second.

The 3D canvas objects solved the deeper pain point, the mental association problem, but required significantly more engineering effort and coordination across teams.

The navigation drawer solved a real, immediate problem with substantially lower build complexity. Shipping it first would get value to users faster, validate the core concept with the pilot, and give the team a foundation to build the 3D object layer on top of. The sequencing decision was a deliberate tradeoff: highest user value per unit of engineering effort first, with the harder problem following once the foundation was established.

The result was an MVP covering the drawer interface and filter navigation, piloted with Aurora customers. The 3D canvas objects were part of the north star vision that followed, a leadership-approved roadmap for how Aurora would become fully integrated with external site data sources across the platform.

The discovery sprint was the right call given the timeline, but substituting internal SME knowledge for direct user research carries real risk. You end up designing for your colleagues' model of the users rather than the users themselves. Given more time, I would have found a way to run even two or three quick sessions with active designers before locking in the solution direction. The north star vision especially would have benefited from earlier user input.